I therefore ran a simple study to quantify the within- and between-participant variation in letter production, as measured by the lognormal parameters nbLog and SNR/nbLog. A quick reminder: SNR is the signal-to-noise ratio, a measure of model fit; nbLog is the number of lognormal curves needed to fit the data; and the ratio of the two takes the model fit and penalises it by how hard the model had to work to get there. The data are here if you care to play.
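For anyone poking at the data, here is a minimal sketch of how the two DVs relate. It assumes the SNR definition commonly used with the Sigma-Lognormal model: 10·log10 of the energy of the recorded 2D velocity over the energy of the reconstruction error, with sums over equally spaced samples standing in for the integrals. The function names `snr_db` and `snr_per_lognormal` are hypothetical, not part of any fitting package.

```python
import math

def snr_db(vx, vy, vx_hat, vy_hat):
    """SNR (dB) between recorded and model-reconstructed velocity profiles.

    Assumed definition (hypothetical helper, not a library API):
    10 * log10( sum(vx^2 + vy^2) / sum((vx - vx_hat)^2 + (vy - vy_hat)^2) ).
    All four sequences must be equally long and equally sampled.
    """
    signal = sum(x * x + y * y for x, y in zip(vx, vy))
    noise = sum((x - xh) ** 2 + (y - yh) ** 2
                for x, y, xh, yh in zip(vx, vy, vx_hat, vy_hat))
    if noise == 0:
        return math.inf  # perfect reconstruction
    return 10 * math.log10(signal / noise)

def snr_per_lognormal(snr, nb_log):
    """Fit quality penalised by model complexity, i.e. SNR/nbLog."""
    if nb_log <= 0:
        raise ValueError("nbLog must be a positive count of lognormals")
    return snr / nb_log
```

So a fit of 25 dB that needs 5 lognormals scores 5.0, while the same 25 dB from 10 lognormals scores only 2.5.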

Participants viewed each letter of the alphabet, one at a time on a screen. Their job was to simply write that letter on a Wacom tablet where I could record the 2D kinematics of their movements. People saw each letter 10 times in a fully randomised order for a total of 260 trials.
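A fully randomised 26 × 10 trial list can be sketched as below. This is an illustration only: the actual presentation software isn't described here, uppercase letters are an assumption, and `make_trial_list` is a hypothetical name.

```python
import random
import string

def make_trial_list(repetitions=10, seed=None):
    """Each letter repeated `repetitions` times, in a fully randomised order."""
    letters = list(string.ascii_uppercase)  # assumed uppercase 'A'..'Z', 26 letters
    trials = letters * repetitions          # 26 x 10 = 260 trials
    rng = random.Random(seed)               # seedable RNG so orders can be reproduced
    rng.shuffle(trials)                     # one shuffle over all 260 trials
    return trials
```

Shuffling the whole list at once (rather than shuffling within blocks of 26) means the same letter can occasionally appear twice in a row, which is what "fully randomised" implies.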

Note: what is coming is entirely exploratory. I am literally just poking around to map out what I'm up against given the nature of the DVs. I am still figuring out the right analysis to capture what I want to say, so any thoughts welcome.

Analysis 1: Letter (26) x Trial (10) within-subjects ANOVA, DV=SNR/nbLog

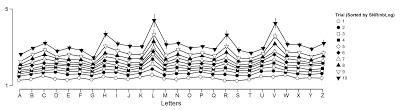

My first instinct is always 'whack it in an ANOVA, see what happens'. The basic design has two within-subject factors: Letter (26 levels, the letters A-Z) and Trial (the 10 repetitions). I went in assuming SNR/nbLog would vary by letter; the letter L requires fewer strokes than the letter Z, for example, and this could readily inflate the SNR/nbLog measure. That is one of the things to quantify, though.

Trial is harder; effectively, I want to use this factor as a measure of the within-person variability in letter production. My first problem is that 'Trial' is the wrong way to sort the data. It's not much of an experimental manipulation, in that I don't expect 'being in Level 1 of Trial' to have any specific, let alone systematic, effect on kinematics. What I've done (and ideas welcome) is to sort the data in Excel by Letter and then, nested within that, by the value of SNR/nbLog. 'Level 1 of Trial' is now 'the worst performance' and Level 10 is now 'the best performance'. In effect I've made 'Trial' a subject variable (each level is defined by the participant's own performance), and each level now seems a bit more sensibly comparable across participants.
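The Excel re-sort can also be scripted. Here is a minimal sketch, assuming the sort is done separately for each participant (which is what a within-subjects Letter × Sorted Trial design needs: one value per participant per cell) and that lower SNR/nbLog means worse performance. The tuple layout and the name `rank_within_letter` are hypothetical.

```python
from collections import defaultdict

def rank_within_letter(rows):
    """Replace presentation order with performance rank.

    rows: iterable of (participant, letter, trial, value) tuples, where value
    is SNR/nbLog. Within each participant x letter cell the repetitions are
    sorted by value, so rank 1 is the worst (lowest) and the last rank the best.
    Returns a list of (participant, letter, rank, value) tuples.
    """
    cells = defaultdict(list)
    for participant, letter, _trial, value in rows:
        cells[(participant, letter)].append(value)  # original trial order is discarded
    ranked = []
    for (participant, letter), values in sorted(cells.items()):
        for rank, value in enumerate(sorted(values), start=1):
            ranked.append((participant, letter, rank, value))
    return ranked
```

Ties simply keep adjacent ranks; with continuous DVs like SNR/nbLog they should be rare.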

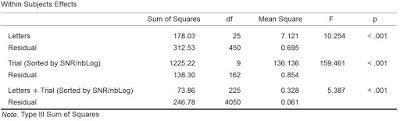

Figure 1. ANOVA table and plot for SNR/nbLog data

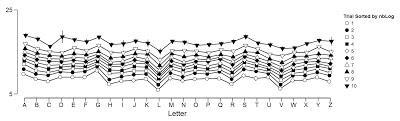

There is a main effect of Letters (SNR/nbLog varies by letter, although not catastrophically for most letters); there is a main effect of Sorted Trial (of course; I kind of force this by sorting the data, although it isn't a guaranteed result, and it tells me that for a given letter there is a wide range of SNR/nbLog values); and there is an interaction (the within-letter/between-participant variability varies by letter). Some thoughts: this analysis basically shows the individual variation. On average, across people, the same letter leads to very different values of SNR/nbLog (the main effect of Trial) and, worse, that variation itself varies by letter (the interaction).

I repeated this with just the nbLog data, because how hard the model has to work to fit a letter, and whether that varies within a letter, is also kind of interesting; see Figure 2. Both main effects and the interaction are significant, the latter at p = .041. There is huge variation; check the scale: anywhere from 5 to 25 lognormal curves are needed to fit the velocity data.
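For transparency about what the F ratios behind those main effects and the interaction are, here is a sketch of a two-way within-subjects ANOVA with one observation per subject per cell. It is not the software used for the analysis above; it assumes a complete, balanced design (e.g. Letter × Sorted Trial) and applies no sphericity correction. `rm_anova_2way` is a hypothetical name.

```python
def rm_anova_2way(data):
    """Two-way within-subjects ANOVA, one observation per subject x cell.

    data[s][i][j]: subject s, level i of factor A (e.g. Letter), level j of
    factor B (e.g. Sorted Trial). Returns {effect: (F, df1, df2)}.
    Uncorrected (no sphericity correction); assumes nonzero error terms.
    """
    n, a, b = len(data), len(data[0]), len(data[0][0])
    grand = sum(x for s in data for row in s for x in row) / (n * a * b)
    m_s = [sum(x for row in s for x in row) / (a * b) for s in data]
    m_a = [sum(data[s][i][j] for s in range(n) for j in range(b)) / (n * b) for i in range(a)]
    m_b = [sum(data[s][i][j] for s in range(n) for i in range(a)) / (n * a) for j in range(b)]
    # Cell means needed for each effect and its subject-interaction error term
    m_as = [[sum(data[s][i]) / b for s in range(n)] for i in range(a)]
    m_bs = [[sum(data[s][i][j] for i in range(a)) / a for s in range(n)] for j in range(b)]
    m_ab = [[sum(data[s][i][j] for s in range(n)) / n for j in range(b)] for i in range(a)]

    ss_a = n * b * sum((m - grand) ** 2 for m in m_a)
    ss_b = n * a * sum((m - grand) ** 2 for m in m_b)
    ss_ab = n * sum((m_ab[i][j] - m_a[i] - m_b[j] + grand) ** 2
                    for i in range(a) for j in range(b))
    ss_as = b * sum((m_as[i][s] - m_a[i] - m_s[s] + grand) ** 2
                    for i in range(a) for s in range(n))
    ss_bs = a * sum((m_bs[j][s] - m_b[j] - m_s[s] + grand) ** 2
                    for j in range(b) for s in range(n))
    ss_total = sum((x - grand) ** 2 for s in data for row in s for x in row)
    ss_subj = a * b * sum((m - grand) ** 2 for m in m_s)
    # Whatever is left over is the A x B x Subject error term
    ss_abs = ss_total - ss_subj - ss_a - ss_b - ss_ab - ss_as - ss_bs

    df_a, df_b, df_s = a - 1, b - 1, n - 1
    return {
        "A": ((ss_a / df_a) / (ss_as / (df_a * df_s)), df_a, df_a * df_s),
        "B": ((ss_b / df_b) / (ss_bs / (df_b * df_s)), df_b, df_b * df_s),
        "AxB": ((ss_ab / (df_a * df_b)) / (ss_abs / (df_a * df_b * df_s)),
                df_a * df_b, df_a * df_b * df_s),
    }
```

Each effect is tested against its own interaction with subjects, which is what makes this a within-subjects test. p-values follow from the F distribution, e.g. `scipy.stats.f.sf(F, df1, df2)`. With 26 letter levels, sphericity may be worth checking, since many packages report corrected (e.g. Greenhouse-Geisser) p-values by default.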

Figure 2. Plot of means for nbLog

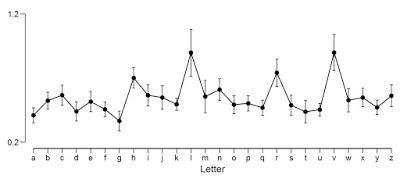

Analysis 2: Letter (26) within-subjects ANOVA, DV=SD(SNR/nbLog)

I like to analyse variability directly too, so for each participant and each letter I computed the standard deviation of SNR/nbLog across the 10 repetitions and ANOVA'd those SDs across letters. There was a main effect of Letter (F(25,450) = 9.915, p < .001).

Figure 3. Average standard deviation of SNR/nbLog
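A minimal sketch of this two-step analysis (per-cell SD, then a one-way within-subjects ANOVA over letters), assuming the sample SD (n − 1 denominator) and no sphericity correction. The dictionary layout and all function names are hypothetical. One useful check: with k = 26 letters the uncorrected error df is (k − 1)(n − 1), so F(25,450) implies 450 / 25 + 1 = 19 participants contributed to this analysis, if those are uncorrected degrees of freedom.

```python
import statistics

def sd_per_letter(values_by_participant):
    """Sample SD of the repetitions in every participant x letter cell.

    values_by_participant[p][letter] is the list of SNR/nbLog values for
    that participant's repetitions of that letter (10 in this study).
    """
    return {
        p: {letter: statistics.stdev(vals) for letter, vals in letters.items()}
        for p, letters in values_by_participant.items()
    }

def to_rows(sds):
    """Arrange {p: {letter: sd}} as rows[s][k] with one fixed letter order."""
    letters = sorted(next(iter(sds.values())))
    return [[sds[p][letter] for letter in letters] for p in sorted(sds)]

def rm_anova_1way(rows):
    """One-way within-subjects ANOVA, no sphericity correction.

    rows[s][k]: subject s in condition k. Returns (F, df1, df2).
    """
    n, k = len(rows), len(rows[0])
    grand = sum(map(sum, rows)) / (n * k)
    cond_means = [sum(r[j] for r in rows) / n for j in range(k)]
    subj_means = [sum(r) / k for r in rows]
    ss_cond = n * sum((m - grand) ** 2 for m in cond_means)
    # Error term = condition x subject interaction (residual after both sets of means)
    ss_error = sum(
        (rows[s][j] - cond_means[j] - subj_means[s] + grand) ** 2
        for s in range(n) for j in range(k)
    )
    df1, df2 = k - 1, (k - 1) * (n - 1)
    return (ss_cond / df1) / (ss_error / df2), df1, df2
```

Usage would be `rm_anova_1way(to_rows(sd_per_letter(data)))`, where `data` is the hypothetical nested dictionary described in `sd_per_letter`.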

The pattern here looks very similar to the main effect of Letters from Analysis 1; surprise surprise, letters that produced bigger spreads of SNR/nbLog also produced higher standard deviations.
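One way to put a number on "looks very similar" would be to correlate, across the 26 letters, each letter's mean SNR/nbLog with its average within-person SD. This is a suggestion rather than part of the analysis above; the data layout and function names are hypothetical, and Pearson's r is computed by hand (Python 3.10+ also offers `statistics.correlation`).

```python
import math
import statistics

def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

def letter_mean_vs_sd(values_by_participant):
    """Correlate each letter's mean SNR/nbLog with its average within-person SD.

    values_by_participant[p][letter] -> list of repetitions for that cell.
    Returns (letters, letter_means, letter_sds, r).
    """
    letters = sorted(next(iter(values_by_participant.values())))
    letter_means, letter_sds = [], []
    for letter in letters:
        cells = [person[letter] for person in values_by_participant.values()]
        # Average, over participants, of each person's own mean and sample SD
        letter_means.append(statistics.fmean([statistics.fmean(c) for c in cells]))
        letter_sds.append(statistics.fmean([statistics.stdev(c) for c in cells]))
    return letters, letter_means, letter_sds, pearson_r(letter_means, letter_sds)
```

A strong positive r would suggest the SD pattern is largely tracking the letter means, which is worth knowing before reading Analysis 2 as a separate finding.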