Making the rounds on Twitter today is a post by Ravi Parikh entitled “How to lie with data visualization.” It falls neatly into the “how to lie with statistics” genre because data visualization is nothing more than the visual representation of numerical information.

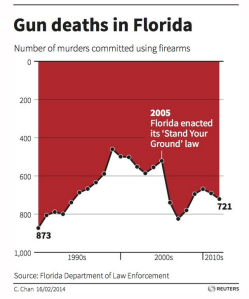

At least one graph provided by Parikh does seem like a deliberate attempt to obfuscate information–i.e., to lie:

Inverting the y-axis so that zero sits at the top is very bad form, as Parikh rightly notes. It is especially bad form given that this graph delivers information about a politically sensitive subject (firearm homicides before and after the enactment of Stand Your Ground legislation).

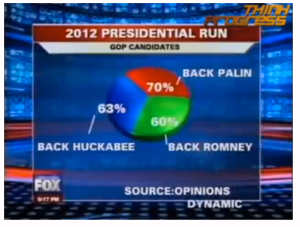

Other graphs Parikh provides don’t seem like deliberate obfuscations so much as exercises in stupidity:

Pie charts whose slices are expressed as percentages need to add up to 100%. No one in Fox Chicago’s newsroom knows how to add. WTF Visualizations, a great site, provides many more examples of pie charts like this one.
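Checking a pie chart’s labels is simple arithmetic. The minimal Python sketch below flags a chart whose percentages do not sum to 100. The function name `pie_percentages_sum_ok`, the one-point rounding tolerance, and the sample slices are all hypothetical; none of them come from the Fox Chicago graphic.

```python
import math


def pie_percentages_sum_ok(slices: dict[str, float], tolerance: float = 1.0) -> bool:
    """Return True if the slice percentages add up to 100%, allowing a small
    tolerance (in percentage points) for labels rounded for display."""
    total = math.fsum(slices.values())
    return abs(total - 100.0) <= tolerance


# Hypothetical survey results, labeled as percentages of all respondents.
consistent = {"Yes": 48.0, "No": 45.0, "Undecided": 7.0}
overlapping = {"Option A": 70.0, "Option B": 63.0, "Option C": 60.0}

print(pie_percentages_sum_ok(consistent))   # True (sums to 100)
print(pie_percentages_sum_ok(overlapping))  # False (sums to 193)
```

The tolerance allows for labels such as 33%, 33%, 33% that were rounded for display. A total far above 100% usually means respondents could pick more than one answer, in which case the categories overlap and cannot be shown as slices of a single pie.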

So, yes, data visualizations can be deliberately misleading, and they can be carelessly designed and therefore uninformative. These are problems with visualization proper; they may or may not reflect problems with the numerical data itself or with the methods used to collect it.

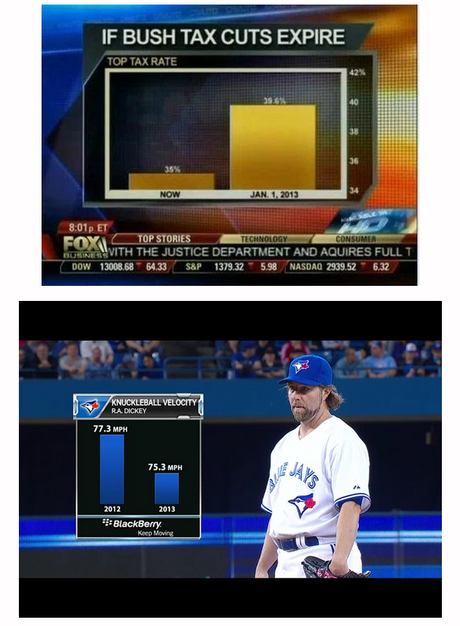

However, one of Parikh’s “visual lies” is more complicated: the truncated y-axis:

About these graphs, Parikh writes the following;

One of the easiest ways to misrepresent your data is by messing with the y-axis of a bar graph, line graph, or scatter plot. In most cases, the y-axis ranges from 0 to a maximum value that encompasses the range of the data. However, sometimes we change the range to better highlight the differences. Taken to an extreme, this technique can make differences in data seem much larger than they are.

Truncating the y-axis “can make differences in data seem much larger than they are.” Whether differences in data are large or small, however, depends entirely on their context. We can’t know whether a difference of 0.001% is major or insignificant unless we have some knowledge of the field in which that statistic was compiled.

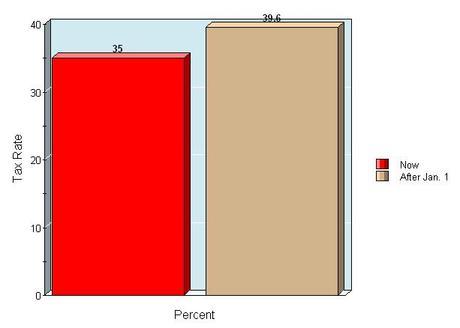

Take the Bush Tax Cut graph above. This graph visualizes a tax raise for those in the top bracket, from a 35% rate to a 39.6% rate. This difference is put into a graph with a y-axis that extends from 34 – 42%, which makes the difference seem quite significant. However, if we put this difference into a graph with a y-axis that extends from 0 – 40%—the range of income tax rates—the difference seems much less significant:
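One way to quantify what the truncated axis does is Edward Tufte’s “lie factor”: the size of an effect as shown in the graphic divided by the size of the effect in the data, where each size of effect is the relative change from the first value to the second. The minimal Python sketch below assumes bars are drawn upward from the bottom of the y-axis, so a bar’s visible height is its value minus the axis minimum; the function names are hypothetical.

```python
def relative_change(first: float, second: float) -> float:
    """Size of effect: the absolute change relative to the first value."""
    return abs(second - first) / first


def lie_factor(first: float, second: float, axis_min: float) -> float:
    """Effect shown by bar heights measured from axis_min, divided by the
    effect actually present in the data."""
    shown = relative_change(first - axis_min, second - axis_min)
    actual = relative_change(first, second)
    return shown / actual


# Top income tax rate before and after the change, in percent.
old_rate, new_rate = 35.0, 39.6

print(f"{lie_factor(old_rate, new_rate, axis_min=34.0):.1f}")  # 35.0 (axis starts at 34%)
print(f"{lie_factor(old_rate, new_rate, axis_min=0.0):.1f}")   # 1.0 (axis starts at 0%)
```

With the 34% baseline, the 39.6% bar is drawn 5.6 times as tall as the 35% bar, although the rate rose by about 13% in relative terms (4.6 percentage points). The calculation measures the exaggeration; it cannot say whether the underlying difference matters.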

So which graph is more accurate? The one with a truncated y-axis or the one without it? The one in which the percentage difference seems significant or the one in which it seems insignificant?

Here’s where context-specific knowledge becomes vital. What is actually being measured here? Taxes on income. Is a 35% tax on income really that much greater than a 39.6% tax? Under the current U.S. tax code, this top bracket applies to income above $400,000/year for individuals and above $450,000/year for married couples. Let’s go with the single-filer figure and tax $400,000 of income at each rate:

35% of 400,000 = 0.35(400,000) = 140,000

39.6% of 400,000 = 0.396(400,000) = 158,400

158,400 – 140,000 = 18,400

So, in real dollars rather than percentages, the rate hike costs $18,400 a year on every $400,000 of income taxed at the top rate. Because U.S. brackets are marginal, the higher rate applies only to income above the threshold, so a single filer pays that full amount only at an income of about $800,000; someone earning just over $400,000 pays only slightly more. The question posed a moment ago (which graph is more accurate?) can therefore also be posed this way: is losing an extra eighteen grand to taxes each year a significant or an insignificant amount?
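To see how the bracket structure changes the dollar figure, the minimal Python sketch below contrasts the whole-income arithmetic with a marginal calculation, in which the higher rate applies only to income above the threshold. It models nothing but the change in the top rate (35% to 39.6% above $400,000, the single-filer threshold cited in this post) and ignores deductions, the lower brackets, and every other part of the tax code. The constant names, function names, and sample incomes are hypothetical.

```python
THRESHOLD = 400_000  # top-bracket threshold for single filers cited in the post
OLD_RATE = 0.35      # top rate before the change
NEW_RATE = 0.396     # top rate after the change


def flat_increase(income: float) -> float:
    """Extra tax if the entire income were taxed at the top rate."""
    return (NEW_RATE - OLD_RATE) * income


def marginal_increase(income: float, threshold: float = THRESHOLD) -> float:
    """Extra tax when the higher rate applies only to income above the threshold."""
    return (NEW_RATE - OLD_RATE) * max(0.0, income - threshold)


for income in (400_000, 500_000, 800_000, 1_000_000):
    print(f"${income:,}: flat ${flat_increase(income):,.0f}, "
          f"marginal ${marginal_increase(income):,.0f}")
```

At exactly $400,000 the marginal increase is zero, and a single filer has to earn $800,000 before the marginal calculation reaches the $18,400 computed above.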

And this, of course, is a subjective question. Ravi Parikh thinks it’s not a significant difference, which is why he used the truncated graph as an example in a post entitled “How to lie with data visualization.” However, I know a couple who are taxed at this rate (their combined income is greater than $450,000). They have four kids. Over 18 years, the money lost to this tax increase will equal what could have been college tuition for two of their kids. I suspect they think the difference between 35% and 39.6% is significant.

What about the baseball graph? It shows R.A. Dickey’s average knuckleball speed from one season to the next. When measuring pitch speed, how significant is the difference between 77.3 mph and 75.3 mph? Is the truncated y-axis making a minor change look more significant than it really is? Because these are averages across an entire season, a drop of 2 mph does seem pretty significant to me. If Dickey were a fastball pitcher, a decline in his average from 92 mph to 90 mph would mean more pitches under 90 mph, which could lead to a higher ERA, fewer starts, and a truncated career. For young pitchers being scouted, the difference between an 84 mph pitch and an 86 mph pitch can apparently mean the difference between getting signed and not getting signed. Granted, there are very few knuckleballers in baseball, so whether this average difference is significant in the context of the knuckleball is difficult to ascertain. In the context of baseball more generally, however, a 2 mph decline in average pitch speed is worth visualizing as a notable decline.
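A short calculation makes the fastball point concrete. The Python sketch below assumes, purely for illustration, that a pitcher’s fastball speeds are normally distributed around the season average with a standard deviation of 2 mph; that spread is a hypothetical value, not a measured one. It uses `statistics.NormalDist` from the standard library (Python 3.8+) to estimate the share of pitches under 90 mph at each average. The function name `share_below` is hypothetical.

```python
from statistics import NormalDist

SPREAD_MPH = 2.0  # hypothetical pitch-to-pitch standard deviation, for illustration only


def share_below(average_mph: float, cutoff_mph: float = 90.0,
                spread_mph: float = SPREAD_MPH) -> float:
    """Fraction of pitches slower than cutoff_mph, assuming normally distributed speeds."""
    return NormalDist(mu=average_mph, sigma=spread_mph).cdf(cutoff_mph)


for average in (92.0, 90.0):
    print(f"average {average:.0f} mph: {share_below(average):.0%} of pitches under 90 mph")
```

Under these assumptions, a 2 mph drop in the average moves the share of sub-90 mph pitches from about 16% to 50%. A different spread would change the numbers, but a lower average always means a larger share of pitches below the cutoff.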

So, do truncated y-axes qualify as the same sort of data-viz problem as pie charts that don’t add up to 100%? It depends on the context. And there are plenty of contexts in which tiny differences are in fact significant. In these contexts, not truncating the y-axis would mean creating a misleading visualization.